OR-Tools – Optimize Your Company’s Workflows

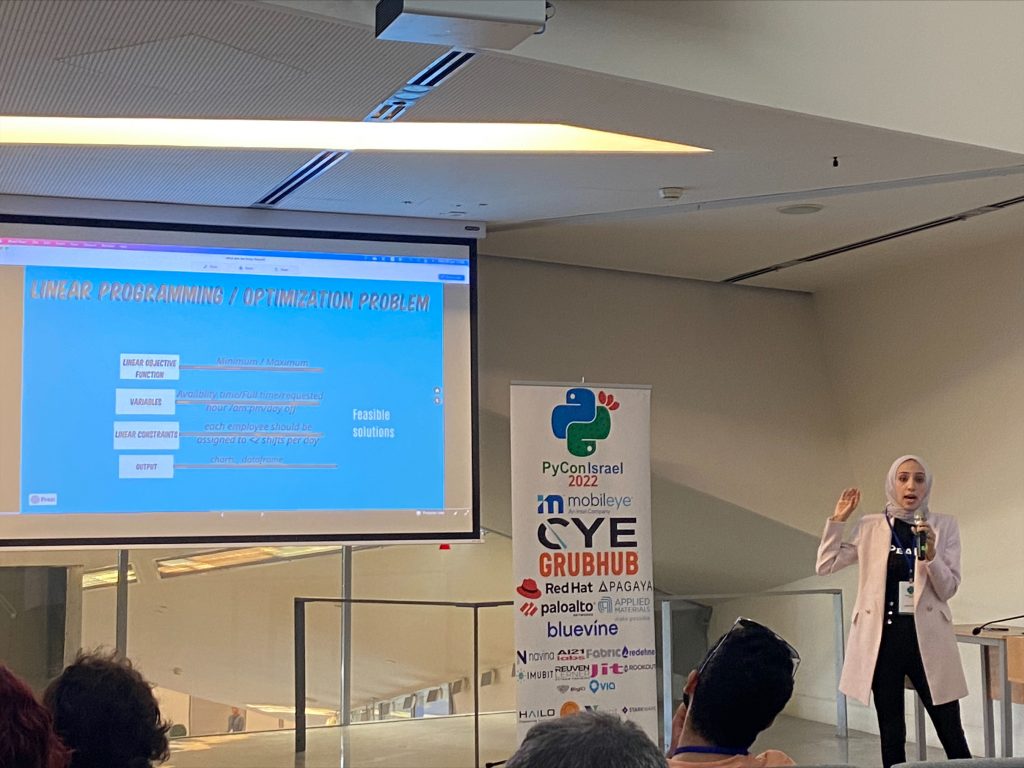

If you read my summary of PyCon Israel day 2, you know that my favorite lecture over the two days was the one that used OR-Tools to solve some scheduling issues the presenter was facing at her company. Aside from it being a great lecture, lightbulbs immediately went off in my head. Could this package solve issues faced by companies around the world?

What is OR-Tools?

The OR-Tools package solves several different types of combinatorial optimization problems. While I knew these types of problems existed, I never really spent much time thinking about them. It certainly never occurred to me that there was software that makes solving them at scale as easy as writing a few lines of code. The OR-Tools package has code to solve the following types of problems:

- Linear optimization

- Constraint optimization

- Mixed-integer optimization

- Bin packing

- Network flows

- Assignment

- Scheduling

- Routing

Now that we know some of the things that OR-Tools can help with, let’s see what I think are its major strengths compared to traditional machine learning.

Why do I find OR-Tools so fascinating?

Ever since I read “Cheaper by the Dozen” in elementary school, I have been kind of obsessed with finding the optimal solution to tasks I face in my daily life. (No, I don’t button my shirts from the bottom up.) I’m the kind of person who organizes their shopping lists by how items are laid out in the store. I also try to group my errands together and not pass by the same place twice. When I was in the dating period of my life, I joked with my friends about an ACPD (average cost per date) metric, which I assumed was somehow correlated to the prospects of actually marrying the young woman (study in progress). So yeah, while I may not have known it, optimization has had a special place in my heart for a while (I lost track of the ACPD for optimization).

OR-Tools – ML without Mounds of Data

Since learning of OR-Tools two weeks ago, I’ve crawled down various rabbit holes of the combinatorial optimization world. I have watched a few graduate-level classes from a course uploaded to YouTube during Corona, as well as another more basic college course (which seemed to have been edited by AI to remove all the dead air, making it very choppy). I also stumbled upon a YouTube video from Barry Stahl called “Building AI Solutions with Google OR-Tools.” He is clear and gives useful examples. This has been the best video I have seen on the subject so far and is definitely worth a watch.

But what did he say that was so revelatory for me? Among all the other great things he says, he mentions something along these lines: “Many machine learning models require mounds of data to give you the best result. But optimization aims to solve a problem given only the data that is presented.” What he means is that if you can express your problem as a formula (an objective plus a set of constraints) before analyzing your mounds of data, you may want to try that first, especially if you have nothing else to go on. You can optimize the profit from your inventory knowing only your profit margins and stock levels, regardless of historical performance (though it helps). Or you can get your teachers and students assigned to their classes each semester without hours of moving around notecards.

As a side note, the lecture also explained why I have ALWAYS had an issue solving Sudokus. On the surface, this kind of puzzle seems like something that would be perfect for my interests. After all, it is a complex problem that needs solving. But, as Barry explained, it is practically IMPOSSIBLE to check every possible combination of digits by brute force. Instead, if you approach it as a “constraints” problem (every row, column, and 3x3 box must contain each digit exactly once), solving it becomes that much easier. If I ever get a few hours, I may even try to program a Sudoku solver. Then I can claim that I have mastered the puzzle.

Conclusion

I still have a lot to learn about OR-Tools and this area of math. The great thing is that OR-Tools and other similar packages have a robust support community around them. As I plan to start a few projects that will leverage OR-Tools, I look forward to both learning from that community and helping you, my readers, on your journey through ML.